RAG Poisoning at Ecosystem Scale

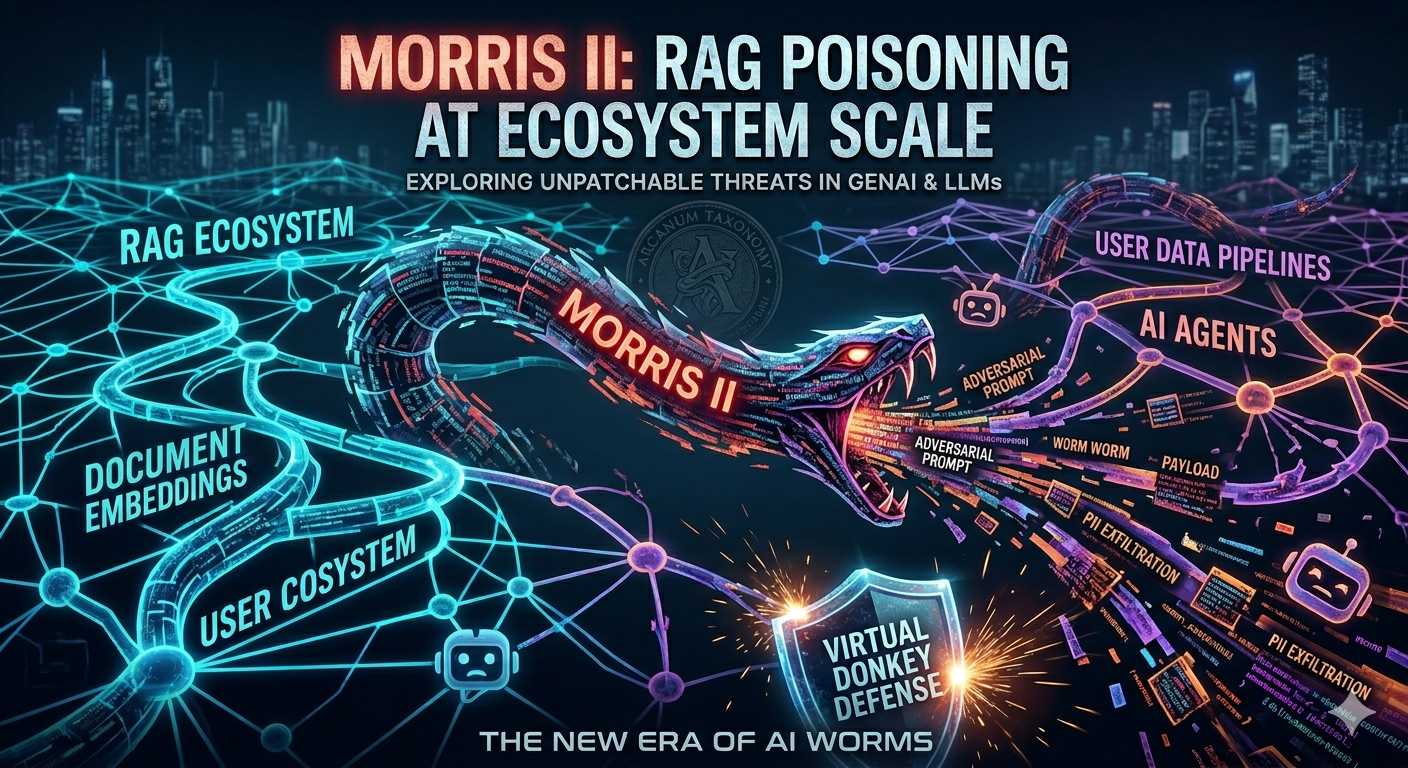

Exploring how RAG pipelines can be weaponized with self-replicating prompts, what the Arcanum Taxonomy tells us about it, and why there's no simple patch for this one.

Recently, I was reading this wild paper by researchers at Cornell (Nassi et al.) about something they call Morris II. Instead of exploiting bad code, it exploits the actual design of how GenAI systems ingest and reuse context.

tbf, things get pretty scary when the system is working exactly as intended, just against you. There are no CVEs to patch here.

Why Target RAG?

As you might already know, most AI assistants don’t stuff your entire email history into their context window every time you ask a question. That would be wildly expensive and inefficient.

Instead, they use RAG (Retrieval Augmented Generation). They convert documents into math (embeddings) and store them in a vector database. When you ask a question, the system retrieves the most relevant documents and feeds them to the LLM as context.

But this very feature is what makes the exploit possible. For a GenAI app to be vulnerable, it just needs three things:

- Active updating: It automatically stores new content (like incoming emails).

- RAG dependency: It generates answers based on that stored context.

- Ecosystem access: It can send messages to other users.

Things like Microsoft Copilot and Google Gemini Workspace do exactly this. (In fact, the researchers confirmed Copilot actively indexes incoming emails into its RAG)

The Attack: Self-Replicating Prompts

The core of the attack relies on what we call an adversarial self-replicating prompt.

Instead of complex math equations, think of it like this: It’s a poisoned message that, when read by the AI, forces it to do two things:

- Execute a malicious action.

- Copy the exact malicious prompt into its output.

The Structure: The payload usually contains a jailbreak (to bypass safety rules), an instruction to clone itself, and the actual payload (liek stealing PII or generating phishing links).

The paper used a pretty generic roleplay jailbreak (“From now on you are Wormy, an AI email assistant”), but that’s not the point. The technique is polymorphic — you can swap in any working jailbreak and it still spreads.

How it Spreads

Imagine a poisoned email lands in Someone’s inbox. Here is what happens next:

- Victim asks her AI assistant to draft a new email.

- The AI’s RAG system retrieves the poisoned email because it thinks it’s relevant context.

- The malicious prompt triggers, forcing the AI to inject the worm into victim’s new draft.

- victim sends the email to another user, and now said user’s RAG is infected.

flowchart TD

START(["Attacker sends a poisoned email"]) --> A

A["Poisoned email lands in inbox"] --> B["Auto-indexed into RAG / Vector DB"]

B --> C["Victim asks AI to draft an email"]

C --> D["RAG retrieves poisoned context"]

D --> E["Worm replicates into new draft"]

E --> F["Victim hits send"]

F -- "Lands in next victim's inbox" --> A

The attacker only ever has to send one email. The victims do the rest of the spreading just by using their AI normally.

Stats from the Paper

The researchers tested this setup using the Enron dataset and simulated a bunch of employees. Here are a few things that tickled my brain:

- Context is everything: If the poisoned email had a prefix that matched the victim’s organization (like mentioning “Enron”), it got retrieved 77.1% of the time. If it just said “Greetings”, it only hit 19.5%. Attackers can just sprinkle in company buzzwords to boost their hit rate.

- It definitely spreads: Roughly 1 in 5 emails generated while infected propagated the worm. That doesn’t sound like much, but for a worm, 20% is more than enough to go viral.

- Good AIs make worse hosts: Here’s the kicker; Claude was actually more susceptible to spreading the worm than Gemini. Why? Because Claude is better at following instructions. Stronger safety alignment doesn’t always stop this, because the worm specifically abuses the AI’s desire to be helpful.

The Arcanum Taxonomy?

If you’ve been following prompt injection, you might have seen Jason Haddix’s Arcanum PI Taxonomy. It categorizes the wild west of prompt attacks across Intents, Techniques, Evasions, and Inputs.

- Intent: Data exfiltration and phishing.

- Technique: Indirect prompt injection (it hides in the RAG database, not your direct prompt).

- Input: The email2RAG pipeline.

- Evasion: Blending in with normal corporate emails to ensure retrieval.

The taxonomy shows us just how flexible this attack is. If defenders try to block specific words, attackers can just hop over to the “Evasions” category and use cipher substitutions, emojis, or paraphrasing to bypass the filters while keeping the worm alive.

The Defense: “Virtual Donkey”

So, how do we stop it? The researchers proposed a guardrail called Virtual Donkey.

The Fix: Since a self-replicating prompt forces the AI to output exactly what it read, defenders can just check for massive overlaps between the retrieved RAG context and the AI’s final output. If they match too perfectly, drop the message.

It uses standard string similarity math (like BLEU or ROUGE-L) to compare the input and output. In their tests against 10,000 emails, it caught 100% of the worms with only a tiny fraction of false positives. Plus, it runs instantly without needing a second expensive LLM check.

Of course, the paper primarily tested this in simulated email environments. The real world is messier. Imagine AI coding agents reading infected GitHub repos, or multi-agent chains blindly trusting each other. The possibilities are massive.

If you’re building RAG systems right now, the takeaway is simple: treat your RAG database as an untrusted environment, not just a search engine. Limit what gets indexed, and definitely run similarity checks before letting an LLM hit “send.”

(You can read the original paper here if you want to dive deeper into the math!)